The era of defaulting to a trillion-parameter behemoth for every AI task is officially over. For years, the narrative has been that bigger is always better, leading to massive, power-hungry Large Language Models (LLMs) locked away in centralized data centers. But the real revolution happening in 2026 isn’t in the cloud—it’s at the edge. The biggest paradigm shift hitting developers, businesses, and indie hackers right now is the pivot toward Small Language Models (SLMs) and “Physical AI.” Lightweight models are migrating AI out of expensive server farms and integrating it directly into the wild: onto our smartphones, factory floors, and 5G network towers.

If you’re building applications today, relying solely on an API call to a distant server is no longer the smartest—or cheapest—path forward. The future is local, fast, and remarkably small.

The Myth That “Bigger is Always Better”

Not long ago, the tech industry was caught in a parameter arms race. But running massive LLMs comes with painful, unavoidable trade-offs: extreme inference costs, glaring latency issues, and absolute dependency on high-speed internet.

Enter the hero of 2026: The Small Language Model (SLM).

SLMs are highly optimized, purpose-built models designed to punch above their weight class. By training on higher-quality, curated datasets rather than scraping the entire internet, models like Microsoft’s Phi-4-mini and Google’s Gemma 3n can handle complex, multimodal reasoning tasks entirely on consumer hardware. We’ve hit an inflection point where you can run a highly capable SLM locally on an Apple M4 Mac or a cheap VPS without breaking a sweat—or your budget.

Visualizing the Edge Computing Paradigm. Source Link · Wikimedia Commons

What is “Physical AI” and Why is it Trending?

The concept of “Physical AI” refers to artificial intelligence that interacts with and understands the physical world in real-time. It requires vision, spatial reasoning, and split-second decision-making—all tasks where a 2-second cloud round-trip latency is unacceptable.

Just days ago, NVIDIA and T-Mobile announced a massive partnership aimed at integrating Physical AI directly into 5G edge networks. This means that instead of a drone or an autonomous factory robot sending its camera feed back to a centralized cloud for processing, specialized AI agents evaluate that data locally at the cell tower or on the device itself.

This enables autonomous systems to operate safely and effectively without constant, high-bandwidth cloud connectivity. If a factory robot detects a safety hazard, it doesn’t need to ask a server 500 miles away for permission to stop; the local SLM has already made the call.

A Simple Edge AI Inference Example

For a developer, running an SLM at the edge is easier than ever. Here is a basic example of how you might script a local inference task using a lightweight model framework in Python:

# bash

# Install an edge inference library like llama.cpp

pip install llama-cpp-python

# python

from llama_cpp import Llama

# Load an optimized SLM directly onto local hardware (e.g., Apple M4 or Edge device)

# 4-bit quantization (q4_k_m) ensures the model fits into constrained memory

llm = Llama(

model_path="./models/phi-4-mini-q4_k_m.gguf",

n_ctx=2048,

verbose=False # Suppress verbose C++ backend logging in production

)

def monitor_sensor_data(sensor_input: dict) -> bool:

"""

Evaluates local sensor data for anomalies using a strict system prompt.

"""

# 1. Robust Prompting: Explicitly instruct the model on the exact output required.

system_prompt = (

"You are an industrial safety AI. Analyze the sensor data. "

"If the temperature exceeds 80 or vibration is above 0.04, output exactly 'ANOMALY DETECTED'. "

"Otherwise, output exactly 'NORMAL'. Do not provide explanations or any other text."

)

user_prompt = f"Sensor reading: {sensor_input}"

full_prompt = f"{system_prompt}\n\n{user_prompt}\nResult:"

# 2. Realistic Inference: Runs locally. Latency on modern edge hardware

# will typically be in the 50-200 millisecond range, not sub-millisecond.

response = llm(

full_prompt,

max_tokens=10, # Keep token generation tiny for maximum speed

stop=["\n"],

echo=False,

temperature=0.0 # Set to 0.0 for strict, deterministic classification

)

# 3. Safe Extraction: Strip whitespace to avoid matching errors

output_text = response['choices'][0]['text'].strip()

if output_text == "ANOMALY DETECTED":

# trigger_local_alert()

return True

return False

# Example usage for a continuous local data feed

# is_anomaly = monitor_sensor_data({"temp": 85, "vibration": 0.05})

# print(f"Alert Status: {is_anomaly}")

The ROI and Security Argument for Businesses

For enterprise operations, Edge AI has rapidly moved from a proof-of-concept to real-world deployment.

- Zero Exposure to Cloud Breaches: The biggest hurdle for enterprise AI adoption has historically been data privacy. By deploying SLMs locally, businesses keep their proprietary data—whether it’s manufacturing schematics, financial documents, or real-time patient analytics—entirely on-premise. No cloud round-trips mean no exposure to third-party data breaches.

- Drastically Reduced Costs: Replacing millions of API calls to massive proprietary LLMs with a locally hosted SLM slashes compute and API costs. Companies don’t need a trillion-parameter genius to monitor a retail checkout line or read a pressure valve gauge.

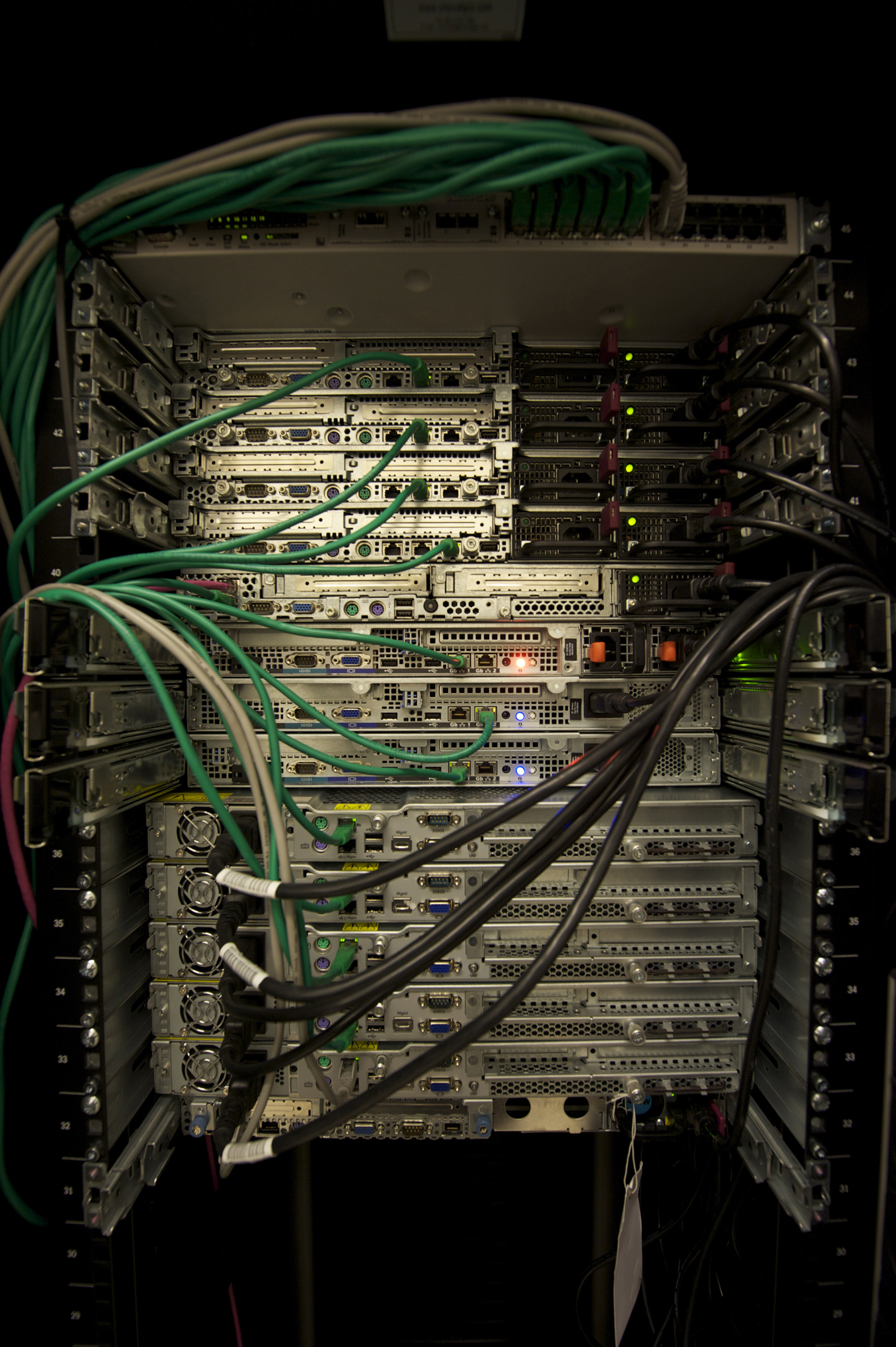

Local servers and edge networks replace distant data centers for edge AI. Source Link · Wikimedia Commons

Local servers and edge networks replace distant data centers for edge AI. Source Link · Wikimedia Commons

The Greener Path Forward

We cannot ignore the sustainability crisis in tech. Data center energy demands have skyrocketed, putting massive strain on global power grids.

The industry is increasingly pushing SLMs as the “greener path” for applied AI. By matching the size of the model to the complexity of the task, we avoid burning vast amounts of electricity to answer simple queries. Deploying a highly efficient SLM for a targeted task—like summarizing an offline document on a smartphone—reduces carbon footprints and eases the strain on global power grids. This continues the 2026 trend toward efficiency, proving that we can scale AI capabilities without scaling our carbon footprint at the same unsustainable rate.

Conclusion: The Future is Small and Local

We are entering an era where AI becomes invisible, omnipresent, and deeply integrated into our immediate physical environments. The reliance on centralized, lagging cloud infrastructure is being actively replaced by smart, capable, and cheap Small Language Models that live in our pockets, on our factory lines, and atop our cell towers.

For everyday users and businesses, this means AI will just work—faster, safer, and completely behind the scenes in their devices.

Sources

- NVIDIA, T-Mobile and Partners Integrate Physical AI Applications on AI-RAN Ready Infrastructure

- Edge AI infrastructure reaches real-world inflection point

- Why small language models may be the greener path for applied AI

- Are small language models finally having their moment?

- The Best Open Source Small Language Models

- Enabling small language models to solve complex reasoning tasks

- How Small Language Models Are Key to Scalable Agentic AI

- The Rise of Small Language Models in Enterprise AI

- The Power of Small Language Models

- Small language models (SLM) scaling Edge/Factory AI

- Edge AI: 2026 update

- The Future of AI Inference is Smarter Local Compute

- On-device AI inference paper